Search data is split now. A page can rank in Google, get cited in an AI answer, and still miss the lead if the page is stale or hard to parse.

That is why a strong SEO stack in 2026 moves beyond traditional SEO, with its focus on single tools, toward one loop for AI visibility and content optimization. Search Console shows demand, Bing AI shows citation visibility, schema gives machines context, and refresh cycles protect what already works. When you run them together, reporting gets cleaner and decisions get faster.

Key Takeaways

- Build your SEO stack 2026 around a closed loop: Search Console for demand and clicks, Bing AI for citation visibility, schema for machine context, and refresh cycles to protect performance.

- Track at the page level, not by channel: give each important URL one scorecard with impressions, CTR, position, AI citations, conversions, and last update date for cleaner reporting and faster decisions.

- Read the Google Search Console and Bing AI Performance reports side by side to spot gaps: strong impressions but weak citations mean you should tighten intros and schema for better AI extraction.

- Use schema matched to page type (e.g., Article, Product, FAQPage) with accurate dates to support trust and citations, and monitor the enhancement reports in Search Console to catch errors.

- Drive content refreshes with data triggers such as a 15% drop in impressions or a CTR decline. Assign cadences by page value (monthly for commercial, quarterly for evergreen) and request re-indexing after updates.

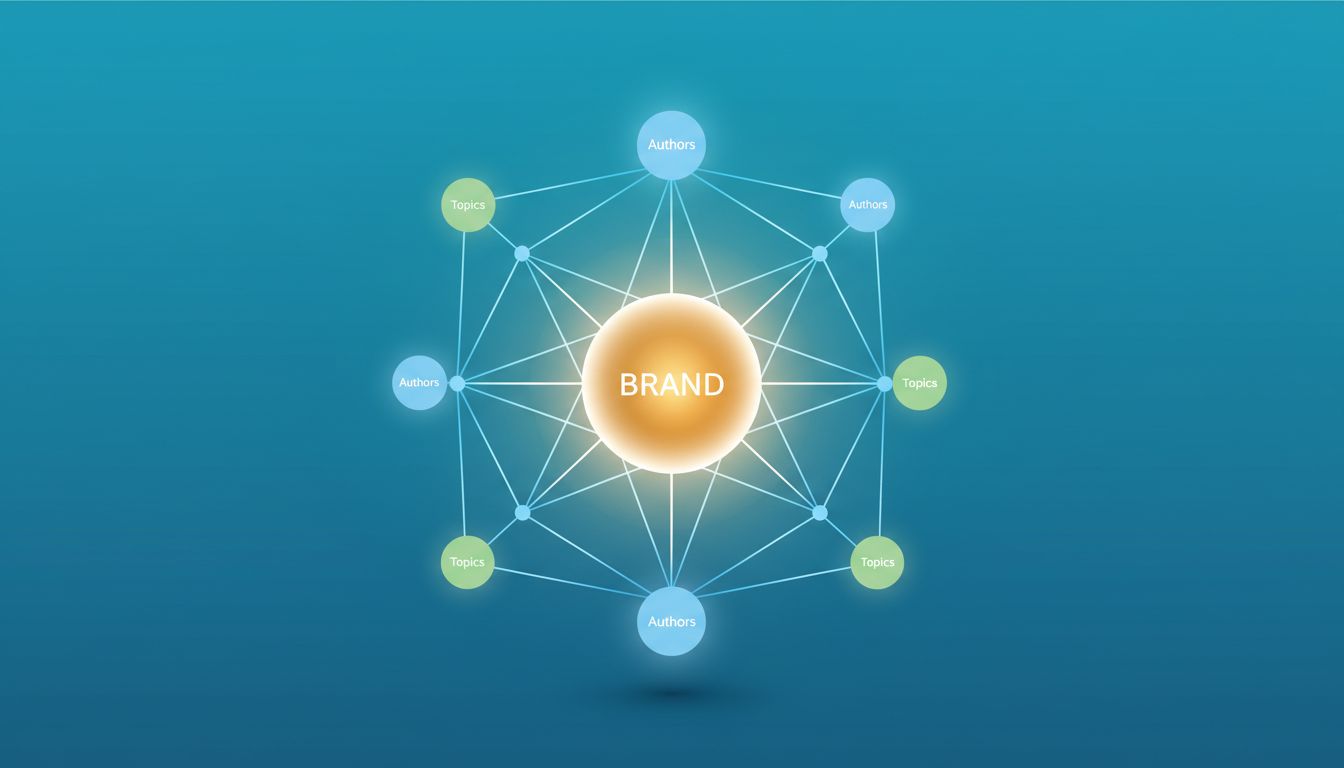

Treat the page as the unit that matters

Most teams still report by channel. That hides the real story. In 2026, the cleanest operating model starts at the URL level, which ties ROI, MQLs, and revenue to specific pages. Every important page should have a search owner, a clear intent, a schema setup, and a refresh tier. Align content optimization with both search intent and user intent for the best results.

A service page should not live in one SEO report and a separate conversion report. It needs one scorecard. Track impressions, CTR, average position, AI citations, conversions, last update date, and insights from competitor analysis in the same row. Then you can see which pages attract attention, which ones earn trust, and which ones close business.
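As a minimal sketch, the one-row scorecard could be modeled like this. The class name, field names, and sample values are illustrative assumptions, not a schema from any tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PageScorecard:
    """One row per important URL. All field names here are hypothetical."""
    url: str
    owner: str
    impressions: int
    clicks: int
    avg_position: float
    ai_citations: int
    conversions: int
    last_updated: date

    @property
    def ctr(self) -> float:
        """Click-through rate as a fraction; 0.0 when there are no impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def conversion_rate(self) -> float:
        """Conversions per click; 0.0 when there are no clicks."""
        return self.conversions / self.clicks if self.clicks else 0.0

    def days_since_update(self, today: date) -> int:
        """Age of the last content update in days."""
        return (today - self.last_updated).days


# Made-up sample values for a single service page.
card = PageScorecard(
    url="https://example.com/services/audit",
    owner="search-team",
    impressions=12000,
    clicks=480,
    avg_position=6.2,
    ai_citations=14,
    conversions=12,
    last_updated=date(2026, 3, 1),
)
```

Keeping CTR and conversion rate as derived properties means the row only stores raw counts, so numbers exported from different tools cannot drift out of sync with each other.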

That single-page view turns tools into a stack instead of a pile of dashboards.

Track pages, not channels. One URL can earn clicks in Google, citations in Copilot, and leads weeks later.

Use Search Console and Bing AI as one visibility layer

Google Search Console is still the control room. The performance report shows which queries and pages win impressions, clicks, CTR, and average position. In May 2026, it also pays to watch essential technical SEO like mobile usability, Core Web Vitals, index coverage with your XML sitemap, and enhancement errors because Google is stricter about mobile experience and structured data.

Review page and query data together, not in isolation. If impressions climb while CTR falls, your snippet or search intent match is weak, especially in AI Overviews. If CTR is steady but position slips, competitors may have fresher or clearer pages. For publishers, brand searches and country-level shifts matter more because Preferred Sources is now global, which raises the stakes for overall brand visibility.

Bing adds the missing half across AI search engines. The AI Performance report in Bing Webmaster Tools shows which URLs get cited in Copilot, Bing AI summaries, and related surfaces. Treat citation trends as an AI visibility signal, not a rankings clone. A page with modest clicks can still shape buying research if AI answers keep using it.

Put the two views side by side, informed by keyword research and competitor analysis. In your data analysis workflow, use automated tasks for rank tracking and SERP intelligence. When Google impressions are strong but Bing citations are weak, tighten the page’s opening answer, simplify headings, and make facts easier to extract. When Bing citations rise but conversions lag, add stronger next steps and internal links from informational pages to commercial ones.
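The side-by-side check can be sketched as a small rule function. The thresholds for "strong" impressions, "strong" citations, and "lagging" conversions are placeholder assumptions; set them from your own baselines.

```python
def diagnose_gap(
    google_impressions: int,
    bing_citations: int,
    conversions: int,
    *,
    strong_impressions: int = 1000,  # assumed threshold, tune per site
    strong_citations: int = 5,       # assumed threshold, tune per site
    min_conversions: int = 1,        # assumed threshold, tune per site
) -> list[str]:
    """Return suggested moves for one URL based on the two visibility views."""
    actions = []
    # Strong Google demand but few AI citations: make the page easier to extract.
    if google_impressions >= strong_impressions and bing_citations < strong_citations:
        actions.append(
            "Tighten the opening answer, simplify headings, make facts easier to extract"
        )
    # AI answers cite the page but it does not convert: strengthen the path to action.
    if bing_citations >= strong_citations and conversions < min_conversions:
        actions.append(
            "Add stronger next steps and internal links to commercial pages"
        )
    return actions


# Example: plenty of impressions, almost no citations.
print(diagnose_gap(google_impressions=5000, bing_citations=1, conversions=3))
```

An empty list means neither gap applies at the chosen thresholds, not that the page needs no work.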

Schema is the translation layer

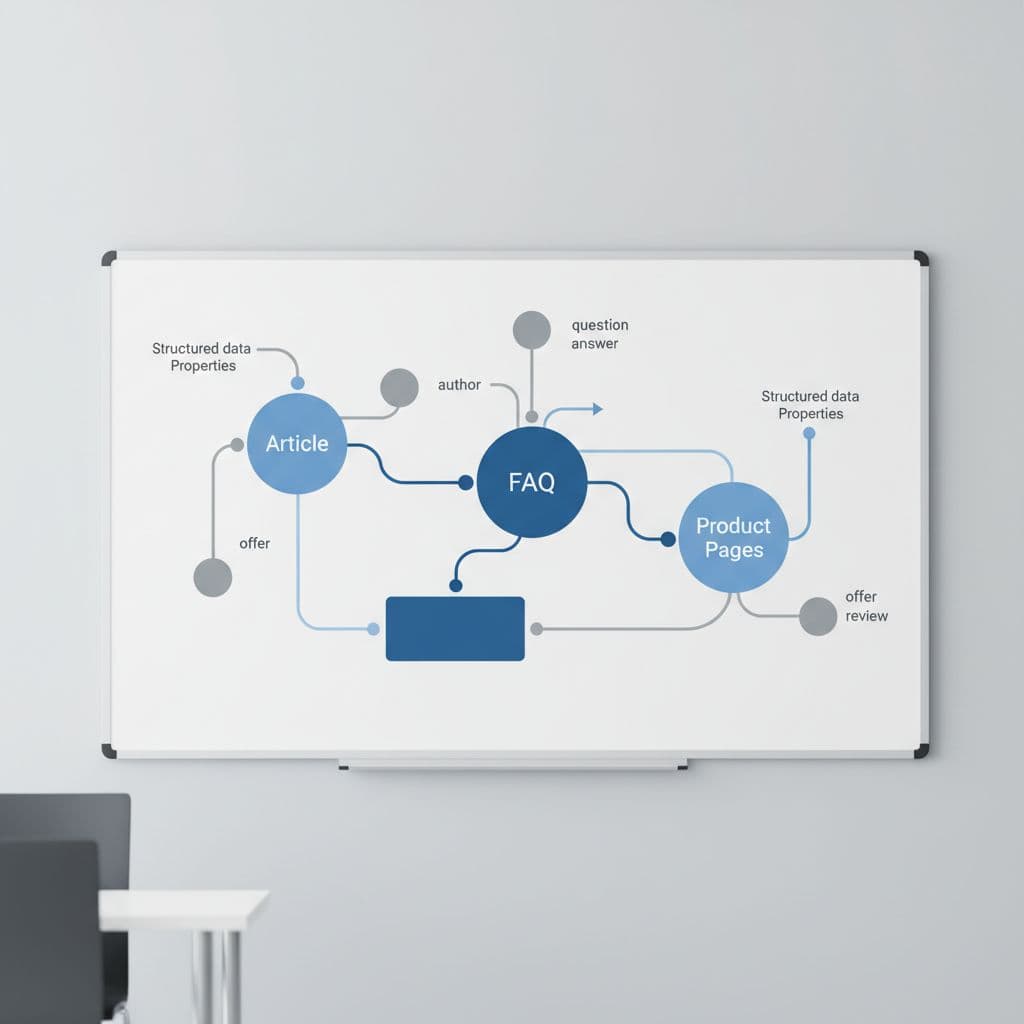

Schema, the primary form of structured data and the foundation for Generative Engine Optimization, helps search engines and AI systems understand what a page is, who published it, and how it fits the site. Use JSON-LD and keep it tied to the visible page content. The best approach is to match schema by page type, not to push one giant template across every URL. For even better results, use an AI writing assistant to generate accurate image alt text that aligns with your schema.

For publishers, start with Organization, WebSite, BreadcrumbList, and Article or BlogPosting. For service businesses, add LocalBusiness where location matters, then FAQPage only when the page already shows real questions and answers. Ecommerce pages need Product data. Author or Person markup also helps when trust in the writer matters.

Keep datePublished and dateModified accurate. Then watch Search Console enhancement reports for parse errors and rich result issues. Bad schema does not add trust. Clean schema, mapped to the right page type, makes accurate citations by AI models more likely.
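Here is a hedged sketch of generating Article JSON-LD with accurate dates. The function name and arguments are hypothetical; the property names (headline, mainEntityOfPage, author, publisher, datePublished, dateModified) come from schema.org.

```python
import json
from datetime import date


def article_jsonld(
    headline: str,
    url: str,
    author_name: str,
    publisher_name: str,
    published: date,
    modified: date,
) -> str:
    """Build an Article JSON-LD string; values must match the visible page."""
    # Guard against the most common date mistake before it ships.
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
markup = article_jsonld(
    headline="Example headline",
    url="https://example.com/blog/example",
    author_name="Example Author",
    publisher_name="Example Co",
    published=date(2026, 1, 15),
    modified=date(2026, 5, 2),
)
print(markup)
```

The output would go inside a `<script type="application/ld+json">` tag in the page. Other page types (Product, LocalBusiness, FAQPage) need their own property sets rather than a variation of this one.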

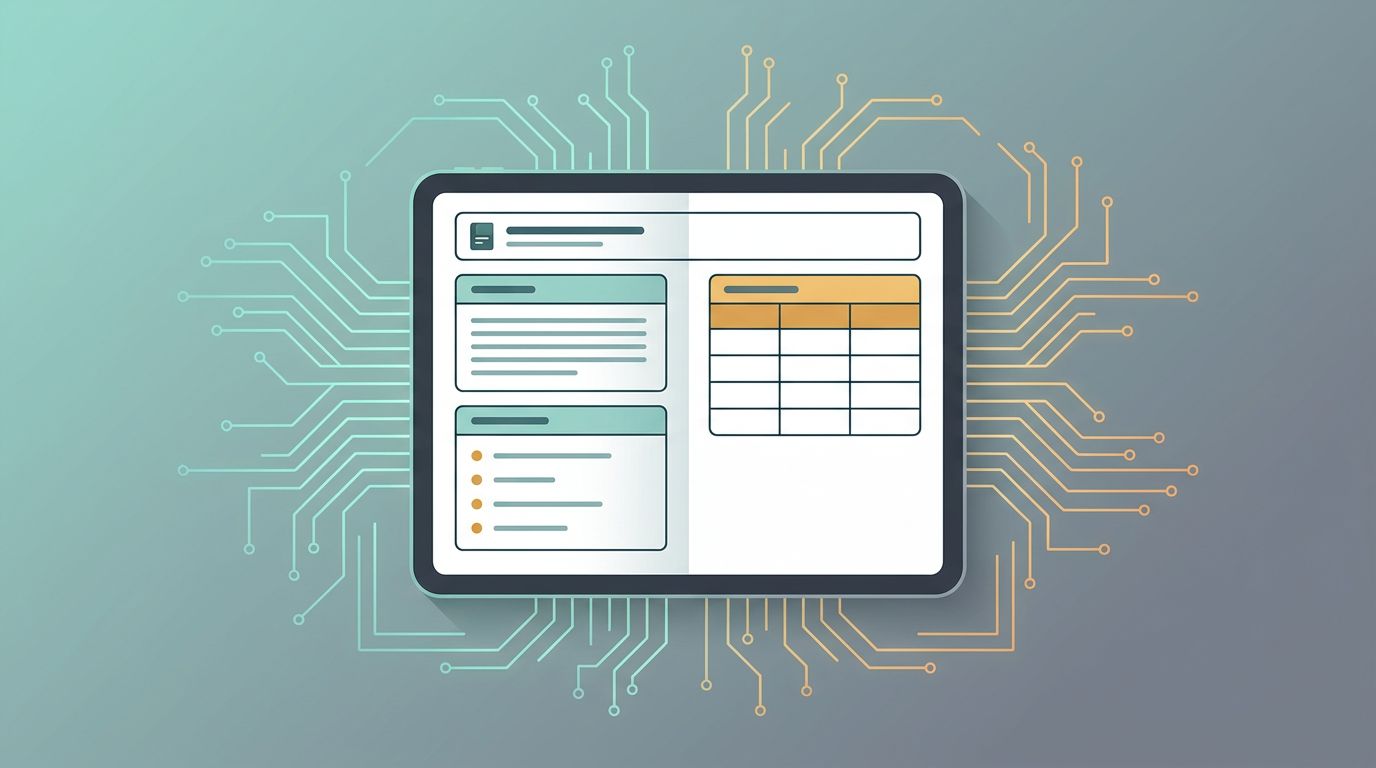

Build content refresh cycles from decay and value

Refresh work for content optimization should start with evidence, not a calendar reminder. Use Search Console first because impressions and position usually move before traffic tools show a drop. A good content refresh playbook starts by recording a 28-day and 90-day baseline for clicks, impressions, CTR, position, and conversions, incorporating keyword research and competitor analysis as core components. This approach builds topical authority while sustaining brand visibility through predictive SEO insights.
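A baseline can be recorded with a small aggregation over daily rows. This sketch assumes you have already exported per-day data for one URL as dictionaries with the keys shown; the row shape and the impression-weighted position are assumptions, not a Search Console export format.

```python
from datetime import date, timedelta


def baseline(daily_rows: list[dict], end: date, days: int) -> dict:
    """Summarize the trailing `days` days (inclusive of `end`) for one URL."""
    start = end - timedelta(days=days - 1)
    window = [r for r in daily_rows if start <= r["date"] <= end]
    clicks = sum(r["clicks"] for r in window)
    impressions = sum(r["impressions"] for r in window)
    conversions = sum(r.get("conversions", 0) for r in window)
    # Weight position by impressions so low-volume days do not skew the average.
    weighted_pos = sum(r["position"] * r["impressions"] for r in window)
    return {
        "days": days,
        "clicks": clicks,
        "impressions": impressions,
        "conversions": conversions,
        "ctr": clicks / impressions if impressions else 0.0,
        "position": weighted_pos / impressions if impressions else None,
    }


# Synthetic, constant daily data purely for illustration.
end_day = date(2026, 5, 31)
rows = [
    {"date": end_day - timedelta(days=i), "clicks": 10, "impressions": 200,
     "position": 5.0, "conversions": 1}
    for i in range(120)
]
baseline_28 = baseline(rows, end_day, 28)
baseline_90 = baseline(rows, end_day, 90)
```

Storing both windows next to each other makes it easier to tell a short dip from a sustained slide.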

These are the triggers worth acting on, with automated tasks helping monitor them across the stack:

| Signal | Likely cause | Best move |
|---|---|---|
| Impressions down 15%+ over 3 months | Fresh competitors or lower demand | Update facts, examples, and missed subtopics |
| Impressions flat, CTR down | Snippet no longer matches intent | Rewrite title and meta, strengthen the intro, check schema |
| Position down, CTR flat | Page relevance is weakening | Rework headings, internal links, and supporting sections; include backlink analysis |
| Traffic stable, conversions down | Visitors want a different next step | Tighten the CTA, add proof, or split info and sales intent |
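The four signals in the table can be expressed as a rule check between two periods. This is a sketch under stated assumptions: "flat" and "stable" are taken to mean within 5% of the earlier value, "down" means a relative drop of more than 5%, and a position is "down" when its number grows. Only the 15% impressions threshold comes from the table.

```python
MOVES = {
    "impressions_down": "Update facts, examples, and missed subtopics",
    "ctr_down": "Rewrite title and meta, strengthen the intro, check schema",
    "position_down": "Rework headings, internal links, and supporting sections",
    "conversions_down": "Tighten the CTA, add proof, or split info and sales intent",
}


def pct_change(before: float, after: float) -> float:
    """Relative change from before to after; 0.0 when there is no baseline."""
    return (after - before) / before if before else 0.0


def refresh_triggers(
    before: dict,
    after: dict,
    *,
    impressions_drop: float = 0.15,  # from the table
    flat: float = 0.05,              # assumed tolerance for "flat" / "stable"
) -> list[str]:
    """Compare two period summaries and return the matching best moves."""
    imp = pct_change(before["impressions"], after["impressions"])
    ctr = pct_change(before["ctr"], after["ctr"])
    clicks = pct_change(before["clicks"], after["clicks"])
    conv = pct_change(before["conversions"], after["conversions"])
    # A larger position number means a worse ranking.
    pos = pct_change(before["position"], after["position"])

    moves = []
    if imp <= -impressions_drop:
        moves.append(MOVES["impressions_down"])
    if abs(imp) <= flat and ctr < -flat:
        moves.append(MOVES["ctr_down"])
    if pos > flat and abs(ctr) <= flat:
        moves.append(MOVES["position_down"])
    if abs(clicks) <= flat and conv < -flat:
        moves.append(MOVES["conversions_down"])
    return moves


# Made-up period summaries: impressions fall 20%, everything else keeps pace.
earlier = {"impressions": 10000, "clicks": 500, "ctr": 0.05, "position": 5.0, "conversions": 20}
later = {"impressions": 8000, "clicks": 400, "ctr": 0.05, "position": 5.0, "conversions": 16}
print(refresh_triggers(earlier, later))
```

More than one rule can fire for the same page, in which case the moves are returned in table order.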

Then assign cadence by page value. Review top commercial pages every month. Check high-traffic evergreen guides each quarter. Leave low-value pages on a light annual pass unless decay is sharp. For updates, use a content editor that provides NLP-based suggestions to better address user intent. After a meaningful update, request re-indexing and give the page about 28 days before you judge the result.
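The cadence rules reduce to a small date calculation. In this sketch the tier names are hypothetical, and "monthly", "quarterly", and "annual" are approximated as 30, 90, and 365 days.

```python
from datetime import date, timedelta

# Assumed day counts for the three tiers described above.
REVIEW_INTERVAL_DAYS = {
    "commercial": 30,   # top commercial pages, reviewed monthly
    "evergreen": 90,    # high-traffic evergreen guides, checked quarterly
    "low_value": 365,   # light annual pass
}
JUDGE_AFTER_DAYS = 28   # wait roughly 28 days before judging an update


def next_review(tier: str, last_review: date) -> date:
    """Date of the next scheduled review for a page in the given tier."""
    return last_review + timedelta(days=REVIEW_INTERVAL_DAYS[tier])


def judge_on(updated: date) -> date:
    """Earliest date to evaluate the effect of a meaningful update."""
    return updated + timedelta(days=JUDGE_AFTER_DAYS)


print(next_review("commercial", date(2026, 5, 1)))
print(judge_on(date(2026, 5, 1)))
```

A sharp decay signal should still override the schedule; the interval only sets the default.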

This cadence helps AI visibility too. Fresh pages with direct answers, updated examples, and clear structure are easier for both search engines and answer engines to trust.

Frequently Asked Questions

What is the SEO stack 2026?

The SEO stack 2026 integrates Search Console, Bing AI, schema, and refresh cycles into one loop for AI visibility and content optimization. It shifts from channel-based reporting to page-level scorecards, combining demand signals, citation trends, machine context, and decay protection. This setup delivers cleaner reporting, faster decisions, and sustained revenue from search and AI.

Why track SEO at the page level instead of channels?

Page-level tracking reveals the full story: one URL can earn Google clicks, Copilot citations, and conversions weeks later. A single scorecard per page merges impressions, CTR, position, AI visibility, and updates, turning tools into a unified stack. This improves ROI, aligns with intent, and makes optimization actionable.

How do Search Console and Bing AI work together?

Google Search Console shows impressions, clicks, CTR, and position, plus technical issues such as Core Web Vitals. The Bing AI Performance report reveals citations in Copilot and AI summaries as an AI visibility signal. Use them side by side with keyword research: if Google impressions are strong but Bing citations are weak, simplify structure and schema for better extraction.

What schema should I use for my pages?

Match schema to page type: Organization, WebSite, and Article for publishers, LocalBusiness and FAQPage for services, Product for ecommerce. Use JSON-LD tied to visible content with accurate datePublished and dateModified. Monitor Search Console enhancement reports for errors, because clean schema supports trust and accurate AI citations.

How do I set up content refresh cycles?

Start with 28-day and 90-day baselines from Search Console for impressions, CTR, position, and conversions. Trigger updates on signals such as a 15% drop in impressions (update facts) or a CTR decline (rewrite the snippet). Assign cadences by value, monthly for top commercial pages and quarterly for evergreen guides, then request re-indexing after changes and judge results after about 28 days.

Conclusion

The SEO stack 2026 works when the loop is closed. It balances traditional SEO with the nuances of AI search engines. Search Console shows demand and click behavior, Bing AI shows citation visibility that drives higher AI visibility and more brand mentions, schema provides context, and refresh cycles protect revenue pages from decay.

The strongest setup in 2026 is a page-based system. If each important URL has one scorecard, one owner, and one refresh rule, your search program becomes easier to run and harder to fool, and it delivers more reliable rank tracking results.