If you’re still running the 2020 SEO playbook, you’re already losing ground. In 2026, search engine optimization reaches beyond one website, one keyword list, and one ranking report.

Visibility now spans Google, YouTube, Reddit, LinkedIn, and AI search tools like ChatGPT, Perplexity, Claude, and Gemini. The brands that win show up everywhere, keep content fresh, and use AI to move faster without handing quality over to machines.

The SEO tips below cover the tactics that matter now. Search intent is the foundation of this 2026 playbook: the goal is to satisfy what users need on every platform where they search.

The shift is big, but the playbook is clear.

Key Takeaways

- Combine traditional SEO with AI and multi-platform optimization: Google remains dominant, but visibility across YouTube, Reddit, LinkedIn, and AI tools like ChatGPT and Perplexity is essential—don’t optimize for one channel alone.

- Distribute high-quality content everywhere: Start with pillar pages on your site, repurpose into videos, social posts, and community answers to build authority signals that lift rankings across search engines.

- Prioritize freshness: Publish weekly, audit quarterly, and track trends monthly—95% of AI citations come from content under 10 months old, making updates a core ranking factor.

- Leverage AI workflows with human review: Use agents for repurposing, keyword research, and page building to scale output, but humans must add judgment, facts, and voice to avoid thin content penalties.

- Play the long game with expanded metrics: Commit to 12 months so traffic can compound, and track Google performance plus AI mentions, sentiment, and competitive share, not just rankings and visits.

5 SEO Tips and Shifts That Matter Most Right Now

A lot of old SEO advice still works in parts. The problem is that it no longer works by itself. You can still rank on Google with solid pages, good internal linking, and helpful content. But if that’s your full strategy, you’re leaving attention, citations, and branded discovery to everyone else.

The current playbook has five parts:

- Traditional search engine optimization still matters, but it has to work alongside AI search optimization.

- High-quality content remains the prerequisite, but it has to be distributed across multiple platforms, not only your site.

- Freshness matters more than most teams think, especially for AI citations.

- AI workflows can multiply output, but human review still decides quality.

- Organic traffic compounds over time, so you need better metrics and a longer time horizon.

This approach isn’t theory. It’s based on a model that has produced hundreds of millions of organic visits and substantial revenue from search-led growth. It also lines up with how enterprise teams are shifting spend, because AI visibility is starting to sit closer to brand awareness than old-school rank tracking.

If you want one simple takeaway up front, it’s this:

If you’re only optimizing for Google, you’re leaving a large share of discovery on the table.

Traditional SEO still matters, but it no longer stands alone

Search hasn’t moved away from Google. It has widened around it.

Google still handles an enormous volume of daily searches. In the figures shared here, Google gets about 13.7 billion searches per day, while ChatGPT gets about 1 billion. That means Google is still much larger. So no, traditional SEO is not dead.

But relying on traditional SEO alone is where teams get into trouble.

Some marketers have gone all-in on AI search and stopped caring about Google’s core mechanics. Others have ignored AI search and wondered why branded discovery is getting weaker. Both are wrong. In 2026, the better model is traditional SEO plus AI SEO, or what many teams now call answer engine optimization and generative engine optimization.

A simple comparison makes the shift easier to see:

| Channel | Daily search volume shared here | What it means |
|---|---|---|
| Google | 13.7 billion | Still the biggest source of demand and the base layer for visibility |
| ChatGPT | 1 billion | Smaller today, but growing fast and shaping discovery behavior |

Google still matters because AI systems often pull from the web pages that already rank, get cited, and show authority signals, including strong pages that compete for featured snippets within the SERPs. AI Overviews also depend on the same web ecosystem, where internal links and schema markup help bots parse site data effectively. If your site has weak technical SEO (slow pages, for example), thin content, or weak E-E-A-T signals that make it hard to trust, that weakness usually shows up in both places.

At the same time, AI search changes the rules around freshness. One of the most important numbers in this playbook is that 95% of AI citations come from content published or updated within the last 10 months. If your best content is two years old and untouched, your brand may be easy to find in Google, yet nearly absent from AI answers.

That creates a new operating model. You still need strong on-site SEO, but you also need current content, outside mentions, and signals that stretch beyond your own domain.

There’s one more layer that many teams miss. AI visibility is pulling money from brand budgets, not only SEO budgets. That makes sense because leadership increasingly cares about questions like these:

- Are we mentioned in ChatGPT, Perplexity, Claude, and Gemini?

- Are those mentions positive or negative?

- Do we appear more often than competitors?

Those are brand questions as much as search questions.

Because of that, the smart move is not to split SEO and AI into separate programs. Build one system. Keep your technical basics in place, including crawlability, site speed, structured data, and trust signals on the page. Then extend that system into AI visibility. If you want a deeper look at that broader shift, Epic Webcrafts has a useful GEO strategies for AI search resource hub, and WebFX has a solid piece on adapting SEO for AI search.
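The structured data mentioned above can be sketched in a few lines. This is a minimal illustration, assuming a schema.org `Article` description emitted as JSON-LD. The function name `article_json_ld` and every value passed to it are placeholders, not something taken from the tools named in this article.

```python
import json
from datetime import date


def article_json_ld(headline: str, url: str, author: str,
                    published: date, modified: date) -> str:
    """Return a schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "author": {"@type": "Person", "name": author},
        # Both dates are included so a real refresh is visible to parsers.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder values for illustration only.
    markup = article_json_ld(
        headline="Example pillar page",
        url="https://example.com/pillar-page",
        author="Jane Doe",
        published=date(2025, 6, 1),
        modified=date(2026, 1, 15),
    )
    print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script>` block is what would sit in the page's HTML. Which properties a given search feature actually reads is outside the scope of this sketch.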

Post everywhere with a distribution workflow

The biggest miss in most SEO programs is distribution. Teams still publish a blog post, wait for Google to rank it, and hope that traffic follows. That worked far better 10 or 15 years ago than it does now. This workflow fixes that by building authority across platforms where your audience actually searches.

Today, search is spread across platforms. People research on YouTube. They compare vendors on Reddit. They follow industry takes on LinkedIn and X. They ask AI tools for summaries, options, and recommendations. If your content only lives on your site, you’re present in one place while attention is scattered across many.

YouTube matters for more than reach. It’s the second-largest search engine, it’s owned by Google, and Gemini can crawl it. LinkedIn posts are crawlable. Instagram posts can show up in Google too. Meanwhile, large language models often cite sources like YouTube, Reddit, and Wikipedia. So the content that earns attention outside your site can lift visibility inside search as well, and cross-platform visibility helps generate backlinks and external links naturally.

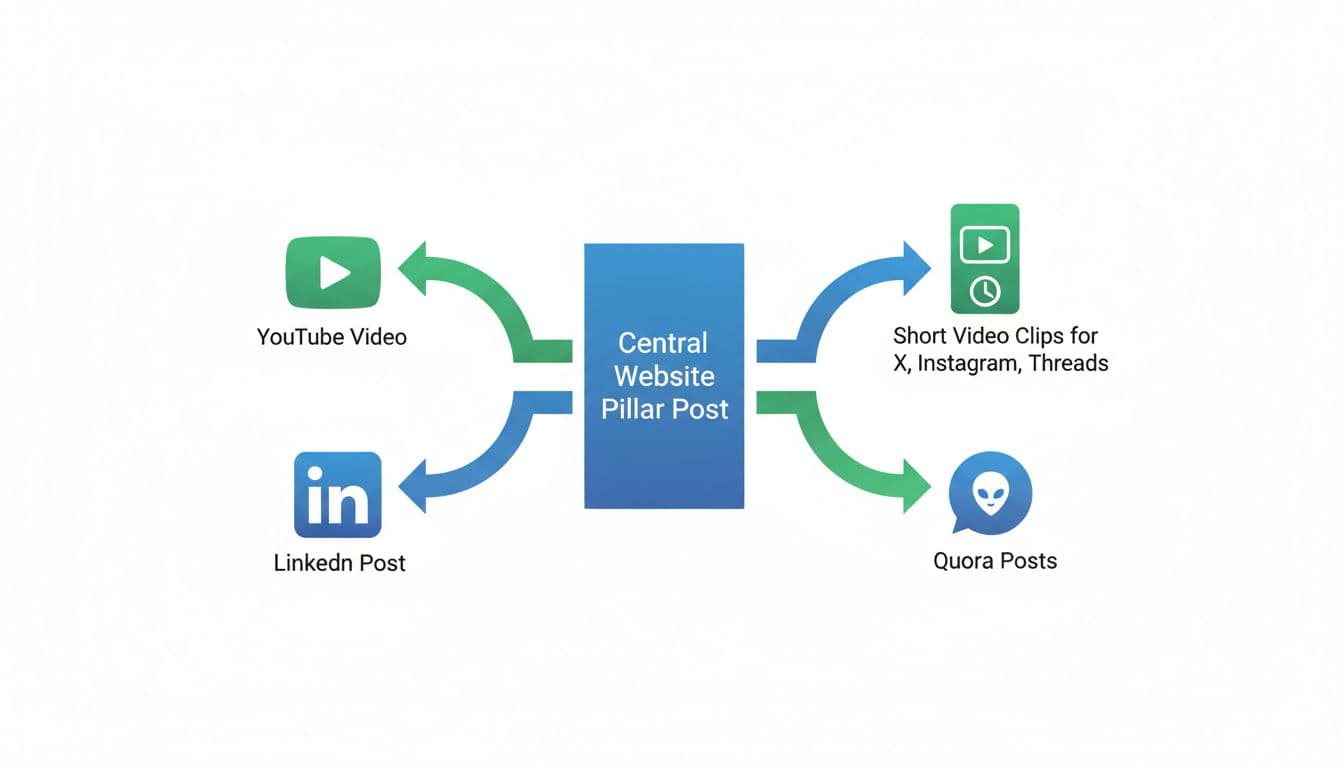

A practical workflow looks like this:

- Start with high-quality content as a pillar page on your website, integrated into a logical site structure that targets long-tail keywords. In many cases, that means a 2,000-word or longer article built for people first. The page should answer the core question clearly, include original examples, and make the next step obvious.

- Turn that page into a video. You can script it yourself, or use AI to draft a script based on the article and your speaking style. Then record it in a studio or from home. Once it hits YouTube, you’re not only publishing content, you’re entering another search engine.

- Repurpose the same idea into a native LinkedIn post. This part matters. Don’t paste your article into LinkedIn and call it done. Write for the feed. Strong hooks, clean spacing, short paragraphs, and platform-appropriate tone matter more than word count.

- Cut the video into shorter clips for X, Instagram, and Threads. A tool like Opus can help find good moments. For any image assets like thumbnails used across platforms, include descriptive alt text. Those clips give the original idea more mileage and create more branded surfaces across the web.

- Join the communities where people ask real questions. Reddit and Quora still matter here. Answer with useful detail. Sometimes it makes sense to link back to your site. Most of the time, the real win is building a trail of helpful contributions.

This is also where platform fit matters. LinkedIn rewards business framing. X allows more opinionated commentary. YouTube depends on packaging, retention, and topic selection. A format that performs on one channel may fall flat on another.

That repackaging step can be systemized. One tool mentioned in this workflow is Stanley, which looks at winning content formats and suggests similar structures for your own posts. In one example, a repurposed post drew 150,000 views on LinkedIn and 50,000 on X. The point isn’t the number. The point is that content often performs better when it’s reworked for the channel instead of copied into it.

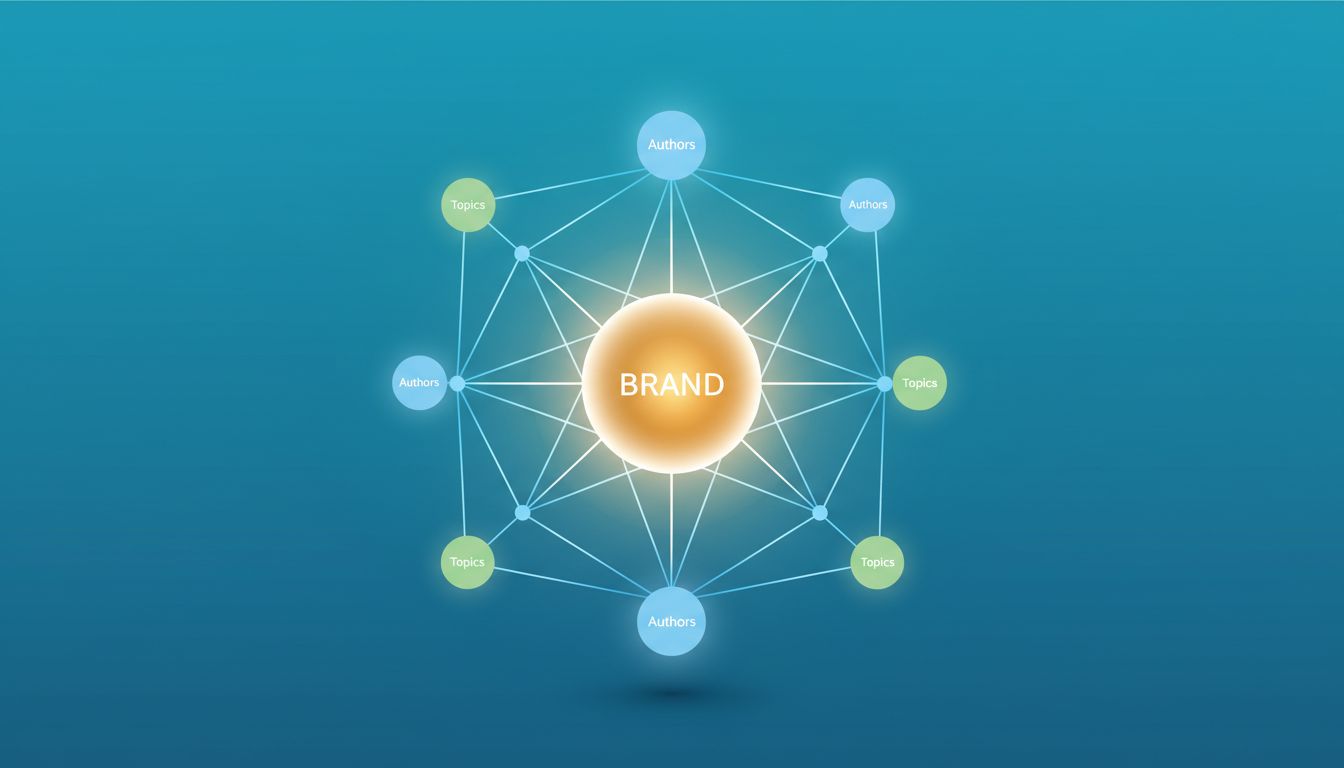

This kind of distribution has another advantage. When someone searches a topic and sees your brand on your website, YouTube, LinkedIn, and Reddit at the same time, your authority looks stronger. Google tracks entities, not only pages. AI systems also pay attention to what other sites say about you, not only what you say about yourself.

Freshness is now part of ranking

A lot of teams publish content like it’s a one-time asset. They write it, optimize it, and move on. That model is weak in 2026.

The more current rule is simple: publish often, then update often. Freshness now affects visibility across both Google and AI search, and AI systems seem to reward recency even more heavily than classic search did.

This old-versus-new view captures the change:

| Old playbook | New playbook |
|---|---|
| Publish once, rank for a long time | Publish often and keep refreshing |
| Focus on static keyword targets | Track topic momentum and update around it |
| Treat updates as occasional maintenance | Build refreshes into the content system |

The system described here has three parts.

First, publish weekly. The target is three to five new pieces of content per week. That mix should include deeper pillar pages and faster posts in the 500 to 800-word range. The goal is not volume for its own sake. The goal is to keep building relevance around timely questions while deepening your core topics.

Second, run quarterly content audits. Pull your top 20 pages and improve them. Add new data. Replace dated examples. Expand missing sections. Add internal links to keep link equity flowing to updated pages. Add FAQs if they help the reader. Refresh the publish date where that reflects a real update. Updating the existing URL, rather than publishing a near-identical new page, also helps avoid duplicate content issues. Then re-promote the improved page across your other channels.
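The audit step can be roughed out as a small script. This is a sketch under stated assumptions: the page records (`url`, `clicks`, `last_updated`) stand in for whatever your analytics export provides, and the 300-day cutoff is an approximation of the 10-month figure cited in this article, not a threshold any search engine publishes.

```python
from datetime import date

STALE_AFTER_DAYS = 300  # assumption: roughly 10 months


def pages_to_refresh(pages, today, top_n=20, stale_after_days=STALE_AFTER_DAYS):
    """Among the top_n pages by clicks, return the stale ones, oldest first."""
    top = sorted(pages, key=lambda p: p["clicks"], reverse=True)[:top_n]
    stale = [p for p in top if (today - p["last_updated"]).days > stale_after_days]
    return sorted(stale, key=lambda p: p["last_updated"])


if __name__ == "__main__":
    # Hypothetical data for illustration only.
    pages = [
        {"url": "/guide-a", "clicks": 5000, "last_updated": date(2024, 3, 1)},
        {"url": "/guide-b", "clicks": 3000, "last_updated": date(2025, 12, 1)},
    ]
    for page in pages_to_refresh(pages, today=date(2026, 1, 15)):
        print(page["url"], page["last_updated"])
```

The output is only a queue of candidates. Deciding what new data, examples, or sections each page needs is still editorial work.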

Third, check trends every month. Google Search Console and Ahrefs can help you monitor topic momentum and spot terms that are climbing before they show strong volume. That matters because breakout topics often look small right before they become crowded, letting you identify shifting search intent early. “LLM personalization” was given as one example of a topic that might have little demand today and far more demand six months later.
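One way to make "climbing before they show strong volume" concrete is to compare two monthly exports of impressions per term. The sketch below assumes you already have those numbers as plain dictionaries. The function name, the thresholds, and the sample figures are all hypothetical, and no Search Console or Ahrefs API is called here.

```python
def rising_terms(previous, current, min_growth=0.5, min_impressions=10):
    """Return (term, growth) pairs for terms that are new or growing quickly.

    previous and current map a search term to its impressions for one month.
    Terms with no previous impressions are reported with growth None.
    """
    results = []
    for term, now in current.items():
        if now < min_impressions:
            continue  # too little data to say anything
        before = previous.get(term, 0)
        if before == 0:
            results.append((term, None))  # new term: no baseline to compare
        else:
            growth = (now - before) / before
            if growth >= min_growth:
                results.append((term, growth))
    # New terms first, then the fastest-growing existing terms.
    results.sort(key=lambda r: (r[1] is not None, -(r[1] or 0.0)))
    return results


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    last_month = {"llm personalization": 40, "seo tips": 1000}
    this_month = {"llm personalization": 90, "seo tips": 1050,
                  "ai visibility tracker": 25}
    for term, growth in rising_terms(last_month, this_month):
        print(term, "new" if growth is None else f"{growth:.0%}")
```

Relative growth is used rather than raw volume because the point of the monthly check is to catch topics while they still look small.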

If your content is stale, AI search may treat your brand as stale too.

This is one place where content operations matter more than one-off brilliance. A clean refresh process beats a few heroic publishing sprints followed by silence. Tools like ClickFlow for AI-powered SEO content are built around that idea, helping teams update content, add internal links, and produce stronger drafts faster.

A broader 2026 view from Loamly’s AI SEO guide supports the same pattern. Start with a baseline, watch where your brand appears in AI answers, and keep feeding the system with useful, current content.

Build AI workflows that increase output, with humans in the loop

Manual content teams now compete against teams that can turn one long-form asset into dozens of finished pieces in minutes. That doesn’t mean quality no longer matters. It means speed is no longer optional.

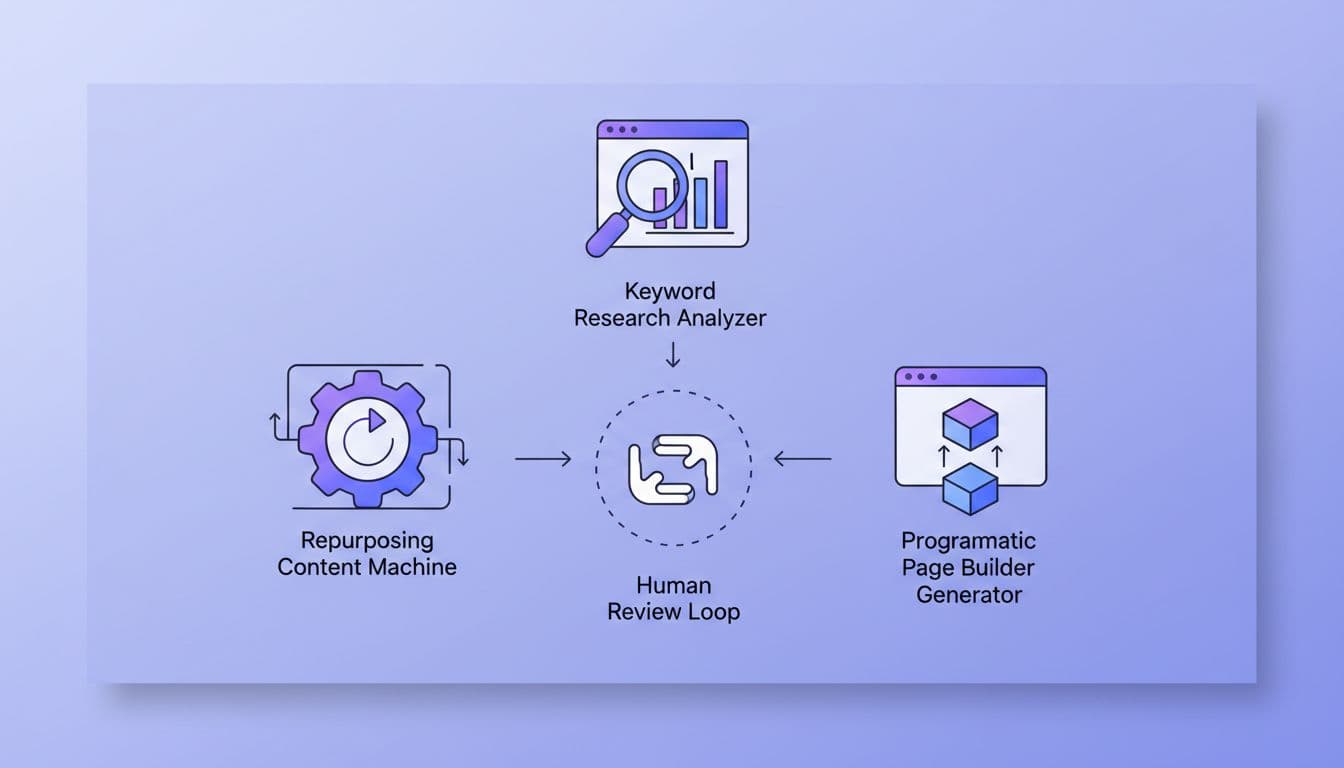

The workflow described here centers on an AI command setup built with tools like Cursor, Claude Code, and MCP (Model Context Protocol) servers. The purpose isn’t full automation. It’s to let specialized agents handle the repetitive 80 to 90 percent so people can spend time on judgment, editing, and promotion.

### Start with three useful sub-agents

The first agent is a repurposing agent. Feed it a podcast episode, a webinar, a pillar page, or a YouTube recording. It can return short-form clip ideas, social posts for different channels, email angles, and even a score for how strong each concept may be. In the example given, one 80-minute podcast became more than 35 pieces of content in a couple of minutes.

The second agent is a keyword research agent. Instead of clicking through SEO tools by hand, the agent connects data sources and looks for useful patterns. It can pull from Ahrefs, Google Analytics, Search Console, and even CRM or sales data. That makes it easier to spot low-volume terms with future upside, B2B transactional keywords, gaps against competitors, and opportunities that line up with revenue instead of vanity traffic.

The third agent is a programmatic page builder. This is useful when you have a valid template and many variations, such as location pages or “best X for Y” pages. Give the agent a working layout, a set of data inputs, and the page type you want. It can build the first draft at scale, often getting the page 80 to 90 percent done before a human touches it.
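The template-plus-data idea behind the page builder can be shown without any agent tooling at all. The following is a simplified sketch using Python's standard `string.Template`. The template text, field names, and the `needs_review` status are assumptions made for illustration, and a real agent setup would generate far richer drafts.

```python
from string import Template

# Hypothetical layout for a "best X for Y" page.
PAGE_TEMPLATE = Template(
    "<h1>Best $product for $audience</h1>\n"
    "<p>$summary</p>\n"
)


def build_drafts(rows):
    """Render one draft per data row and mark it as awaiting human review."""
    drafts = []
    for row in rows:
        # substitute() raises KeyError if a row is missing a template field.
        # Values are inserted as-is; escape them if they come from untrusted input.
        html = PAGE_TEMPLATE.substitute(row)
        slug = f"best-{row['product']}-for-{row['audience']}".lower().replace(" ", "-")
        drafts.append({"slug": slug, "html": html, "status": "needs_review"})
    return drafts


if __name__ == "__main__":
    rows = [
        {"product": "CRM", "audience": "small agencies",
         "summary": "Placeholder summary to be rewritten by an editor."},
    ]
    for draft in build_drafts(rows):
        print(draft["slug"], draft["status"])
```

Every draft starts in `needs_review`, which mirrors the point that the generated page is a first pass and not a finished one.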

### Human review is still the difference-maker

This is the part that separates strong AI-assisted marketing from cheap AI output. Humans must ensure the output meets user experience standards.

AI still hallucinates. It still invents data, smooths away edge cases, and defaults to generic phrasing. Google knows what low-value AI content looks like. LLMs pick up the same thin patterns.

So the better workflow keeps people in the approval loop:

- AI generates the first draft.

- A human checks every factual claim.

- A human adds internal links with refined anchor text and supporting context.

- A human verifies mobile optimization and Core Web Vitals.

- A human shapes the piece for AI Overviews and extraction.

- A human adds judgment, voice, and original perspective.

- Then the team publishes and distributes it.
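The approval loop above can be enforced as a simple gate: a draft is publishable only once every human check has been signed off. This is a minimal sketch, and the check names and the `Draft` class are invented here to mirror the list, not taken from any particular tool.

```python
from dataclasses import dataclass, field

# Hypothetical names mirroring the human steps in the list above.
REQUIRED_CHECKS = (
    "facts_verified",
    "internal_links_added",
    "mobile_and_cwv_checked",
    "formatted_for_extraction",
    "voice_and_perspective_added",
)


@dataclass
class Draft:
    title: str
    completed_checks: set = field(default_factory=set)

    def sign_off(self, check: str) -> None:
        """Record that a human completed one required check."""
        if check not in REQUIRED_CHECKS:
            raise ValueError(f"unknown check: {check}")
        self.completed_checks.add(check)

    def missing_checks(self) -> list:
        """Return the required checks not yet signed off, in list order."""
        return [c for c in REQUIRED_CHECKS if c not in self.completed_checks]

    def ready_to_publish(self) -> bool:
        return not self.missing_checks()


if __name__ == "__main__":
    draft = Draft("Example AI-generated draft")
    draft.sign_off("facts_verified")
    print(draft.ready_to_publish(), draft.missing_checks())
```

The gate cannot judge whether a check was done well. It only makes skipped steps visible before anything ships.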

That last part matters more than most teams admit. The market is already flooded with clean-looking content that says nothing new. Human review is where you add the examples, sharp opinions, client reality, and buyer context that machines still flatten.

There are also a few extra tips worth carrying into this workflow from other current guidance. Structured data helps machines interpret your pages. Visible trust signals help both readers and AI systems. Original examples make your content easier to cite. Panovista’s write-up on AI SEO strategies for 2026 also points to question-based discovery, schema, and AI-friendly formatting as part of the mix.

Play the long game and track the right metrics

The reason many SEO programs fail isn’t strategy. It’s timing.

Teams publish for two weeks, see little movement, and stop. That almost guarantees disappointment. Search-led growth compounds, and the compounding window is longer than most teams want it to be.

The commitment laid out here follows a simple arc. Months one through three are for building the base. Publish at least three times per week, create 10 to 15 pillar pieces, stand up AI workflows, and start posting across the channels your market already uses. Months four through six are for scale. Move closer to five pieces per week, begin refresh cycles, and double down on formats that produce traction. Months seven through 12 are where things often start to stack. Daily publishing becomes possible, rankings improve, AI citations increase, and traffic grows faster because the system has more surfaces to work with. Keep an eye on technical details such as canonical URLs during this phase, so that a growing library of pages does not split signals across duplicate URLs.

The measurement model has to change too. Older SEO scorecards focused on traffic, backlinks, and keyword positions. Those still matter, especially conversions. But they no longer tell the whole story.

A better 2026 scorecard includes:

- Google performance, tracked via Google Search Console for backlinks, organic traffic, and click-through rate, with emphasis on conversions, not raw visits alone

- AI mentions, across ChatGPT, Perplexity, Claude, and Gemini

- Sentiment, so you can tell whether those mentions help or hurt brand perception (especially in local SEO for physical businesses)

- Competitive share, which shows whether your brand appears more often than rivals
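Competitive share in the scorecard above comes down to simple arithmetic once you have a sample of AI answers to the prompts you care about. The sketch below assumes those answers have already been collected as plain strings. The brand names and answers are invented, and matching by substring is a deliberate simplification.

```python
def share_of_voice(answers, brands):
    """Return each brand's share of total brand mentions across AI answers.

    A brand counts once per answer if its name appears (case-insensitive).
    """
    counts = {brand: 0 for brand in brands}
    for answer in answers:
        text = answer.lower()
        for brand in brands:
            if brand.lower() in text:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: counts[brand] / total for brand in brands}


if __name__ == "__main__":
    # Invented answers and brand names for illustration only.
    answers = [
        "Acme and Globex are popular choices.",
        "Many teams pick Acme.",
        "Initech is another option.",
    ]
    for brand, share in share_of_voice(answers, ["Acme", "Globex", "Initech"]).items():
        print(f"{brand}: {share:.0%}")
```

Sentiment is not covered here. It would need a separate human or model-assisted rating of each mention.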

This is why some companies are moving spend from classic SEO teams toward brand teams. AI visibility behaves more like share of voice than a simple rank chart. You are not only chasing clicks. You are trying to become one of the sources that gets cited when buyers ask broad, messy, high-intent questions.

Tools mentioned in this workflow include ClickFlow, Ahrefs, Semrush, and LLM visibility trackers such as Amplitude and Ahrefs Brand Radar. The exact stack matters less than the habit behind it. Measure the full picture, keep publishing, and stick with the program long enough for it to compound.

Frequently Asked Questions

Is traditional SEO dead in 2026?

No. Google still handles about 13.7 billion daily searches versus about 1 billion for ChatGPT, so solid on-site tactics like technical SEO, internal links, and E-E-A-T remain essential. But they must be paired with AI search and multi-platform distribution, as AI often cites top-ranking pages while rewarding freshness and external signals more heavily.

How should I distribute my content across platforms?

Build a workflow starting with a high-quality pillar page on your site, then repurpose into YouTube videos, native LinkedIn posts, short clips for X/Instagram/Threads, and helpful answers on Reddit/Quora. This creates entity authority, generates backlinks, and boosts visibility where users actually search, with platform-specific formatting for better engagement.

Why does content freshness matter more now?

About 95% of AI citations come from content published or updated within the last 10 months, and Google favors recency too, so stale content risks invisibility in answers and overviews. Publish 3-5 pieces weekly, run quarterly audits on top pages with new data and links, and monitor monthly trends via Search Console or Ahrefs to stay ahead of shifting intent.

How can AI help my SEO without sacrificing quality?

Use sub-agents for repurposing assets, keyword research from multiple tools, and drafting templated pages to handle 80-90% of repetitive work. Always loop in humans to fact-check and to add original insights, internal links, and trust signals. AI floods the market with generic output, but human judgment ensures citation-worthy, user-focused content.

What metrics should I track for 2026 SEO success?

Beyond traffic and rankings, monitor AI mentions in ChatGPT/Perplexity/Claude/Gemini, sentiment of those appearances, and competitive share of voice. Use Google Search Console for core performance, plus tools like Ahrefs or Amplitude for the full picture—focus on conversions and long-term compounding over short-term spikes.

Final thoughts

The best SEO tips in 2026 are less about hacks and more about operating model. You still need strong pages with optimized title tags and meta descriptions to define context for all search engines, plus sound technical SEO. But you also need current content, wide distribution, AI-assisted production, and a system for measuring visibility beyond Google alone.

The strongest brands won’t win because they published the most pages. They’ll win because they showed up where buyers search, kept their content fresh, and added human judgment where AI falls short.

If your team can do that for a full year, compounding visibility becomes much easier to earn.